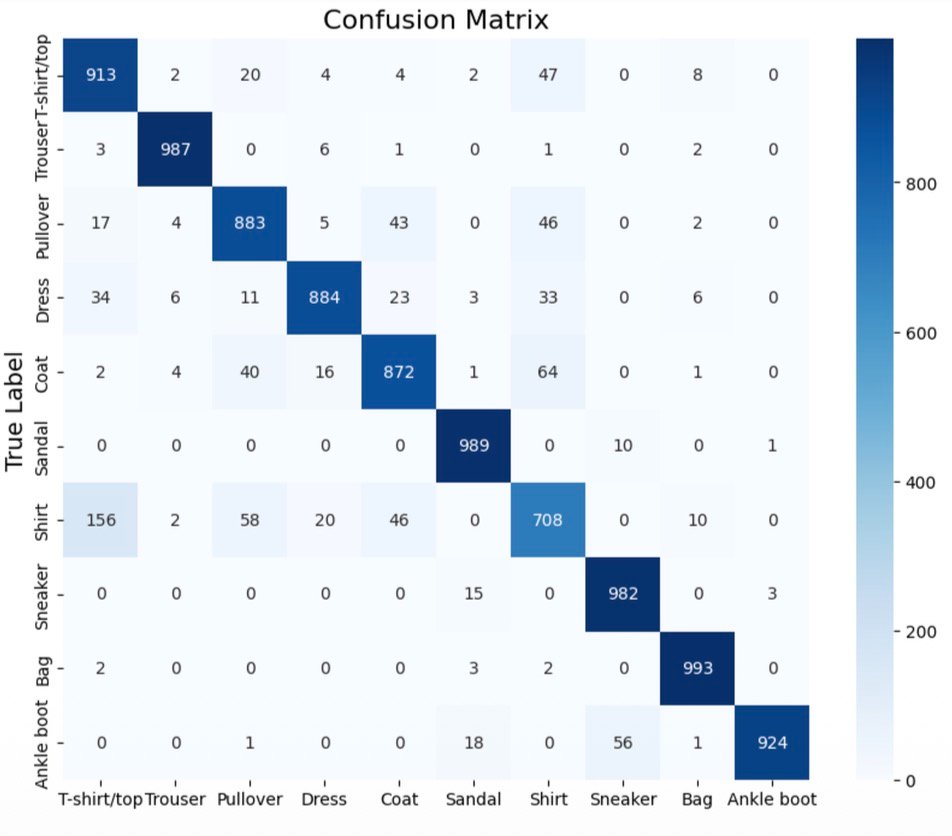

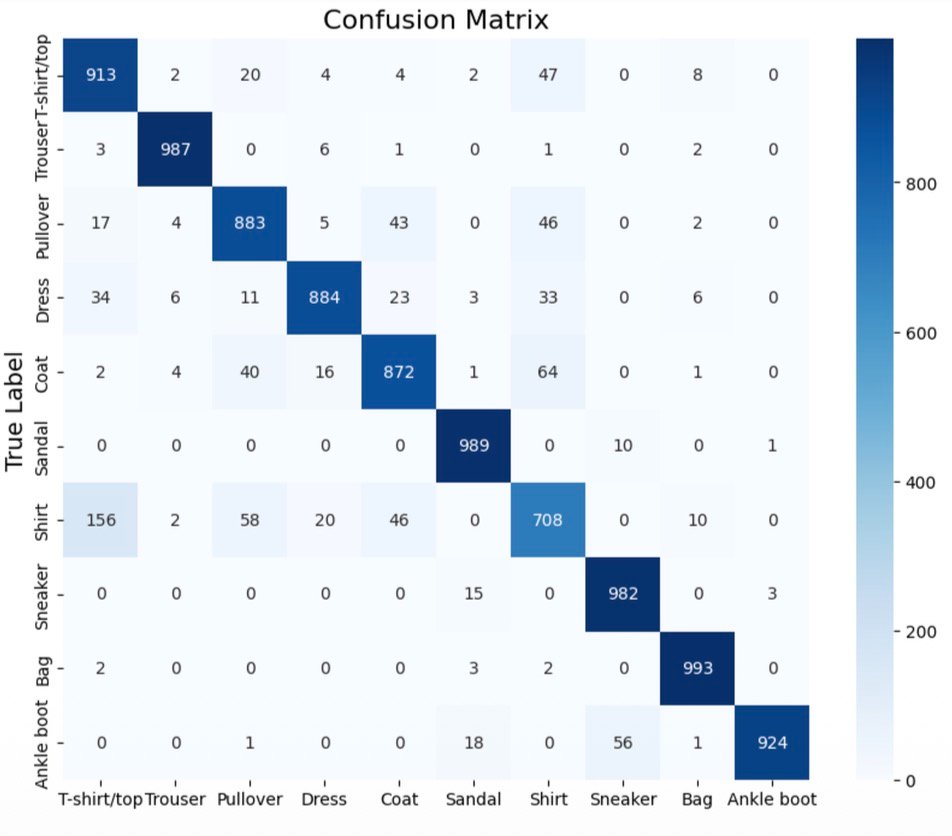

Confusion Matrix

ResNet predictions across all 10 categories (10,000 test samples)
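
As a rough illustration of how a matrix like this is tallied, here is a minimal sketch in plain Python. It is not the project's own evaluation code: the function name `confusion_matrix`, the row/column convention (rows are true classes, columns are predicted classes), and the toy labels are all assumptions made for the example.

```python
def confusion_matrix(y_true, y_pred, num_classes=10):
    """Count (true, predicted) label pairs.

    Assumed convention: rows index the true class, columns the predicted class.
    """
    cm = [[0] * num_classes for _ in range(num_classes)]
    for t, p in zip(y_true, y_pred):
        cm[t][p] += 1
    return cm


def accuracy_from_cm(cm):
    """Overall accuracy is the diagonal (correct predictions) over the total count."""
    correct = sum(cm[i][i] for i in range(len(cm)))
    total = sum(sum(row) for row in cm)
    return correct / total


# Toy labels for three hypothetical classes, not real model output.
y_true = [0, 1, 2, 2, 1]
y_pred = [0, 2, 2, 2, 1]
cm = confusion_matrix(y_true, y_pred, num_classes=3)
acc = accuracy_from_cm(cm)
```

Off-diagonal cells show which pairs of categories get mistaken for each other, which a single accuracy figure hides.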

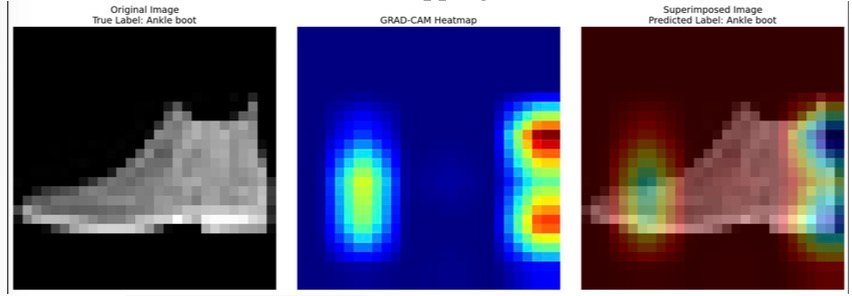

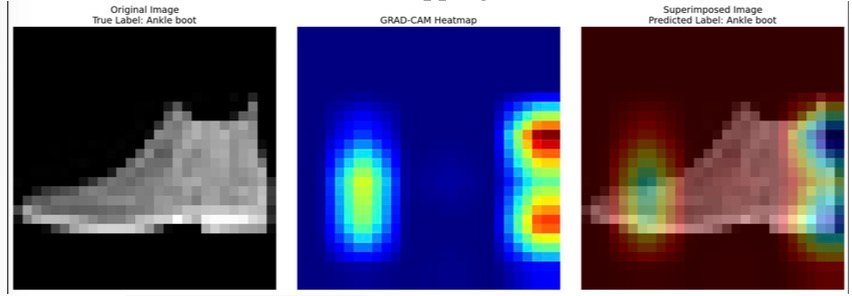

Grad-CAM Visualization

Gradient-weighted Class Activation Mapping — where the model looks to make decisions
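
The core Grad-CAM computation can be sketched in a few lines of NumPy. This sketch assumes the feature maps of one convolutional layer and the gradients of the target class score with respect to them have already been extracted from the model; the function name `grad_cam` and the toy arrays are hypothetical, and this is not the project's own implementation.

```python
import numpy as np


def grad_cam(activations, gradients):
    """Combine feature maps into a class-specific heatmap.

    activations, gradients: arrays of shape (K, H, W) for one image,
    where K is the number of channels in the chosen conv layer.
    """
    # Channel weights: global-average-pool the gradients over space.
    weights = gradients.mean(axis=(1, 2))              # shape (K,)
    # Weighted sum of the feature maps across channels.
    cam = np.tensordot(weights, activations, axes=1)   # shape (H, W)
    # ReLU keeps only regions that push the class score up.
    cam = np.maximum(cam, 0.0)
    # Scale to [0, 1] for display; skip if the map is all zeros.
    peak = cam.max()
    return cam / peak if peak > 0 else cam


# Toy input: two 2x2 channels, one with positive and one with negative gradient.
activations = np.array([[[1.0, 0.0], [0.0, 0.0]],
                        [[0.0, 0.0], [0.0, 2.0]]])
gradients = np.array([np.full((2, 2), 1.0),
                      np.full((2, 2), -1.0)])
heatmap = grad_cam(activations, gradients)
```

In practice the heatmap is upsampled to the input resolution and overlaid on the image; that step is omitted here.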

A comparative study of CNN, ResNet, and traditional ML models on 70,000 fashion images — achieving 91% accuracy with interpretable predictions.

Residual Network with skip connections

Sequential convolutional network
